Fully Automated Insults to Life Itself

In 20 years’ time, we’re going to be talking about “generative AI” in the same tone of voice we currently use to talk about asbestos: a bad idea that initially seemed promising but ultimately caused far more harm than good, and that left a swathe of deeply embedded pollution across the landscape that we’re still cleaning up.

It’s the final apotheosis of three decades of valuing STEM over the Humanities, in parallel with the broader tech industry being gutted and replaced by a string of venture-backed pyramid schemes, casinos, and outright cons.

The entire technology is utterly without value and needs to be scrapped, legislated out of existence, and the people involved need to be forcibly invited to find something better to spend their time on. We’ve spent decades operating under the unspoken assumption that just because we can build something, that means it’s inevitable and we have to build it first before someone else does. It’s time to knock that off, and start asking better questions.

AI is the ultimate form of the joke about the restaurant where the food is terrible and also the portions are too small. The technology has two core problems, both of which are intractable:

- The output is terrible

- It’s deeply, fundamentally unethical

Probably the definitive article on generative AI’s quality, or profound lack thereof, is Ted Chiang’s ChatGPT Is a Blurry JPEG of the Web; that’s almost a year old now, and everything that’s happened in 2023 has only underscored his points. Fundamentally, we’re not talking about vast cyber-intelligences, we’re talking Sparkling Autocorrect.

Let me provide a personal anecdote.

Earlier this year, a coworker of mine was working on some documentation, and had worked up a fairly detailed outline of what needed to be covered. As an experiment, he fed that outline into ChatGPT, intending to publish the output, and I offered to look over the result.

At first glance it was fine. Digging in, though, it wasn’t great. It wasn’t terrible either—nothing in it was technically incorrect, but it had the quality of a high school book report written by someone who had only read the back cover. Or like documentation written by a tech writer who had a detailed outline they didn’t understand and a word count to hit? It repeated itself, it used far too many words to cover very little ground. It was, for lack of a better word, just kind of a “glurge”. Just room-temperature tepidarium generic garbage.

I started to jot down some editing notes, as you do, and found that I would stare at a sentence, then the whole paragraph, before crossing the paragraph out and writing “rephrase” in the margin. To try and be actually productive, I took a section and started to rewrite it in what I thought was a better, more concise manner—removing duplicates, omitting needless words. De-glurgifying.

Of course, I discovered I had essentially reconstituted the outline.

I called my friend back and found the most professional possible way to tell him he needed to scrap the whole thing and start over.

It left me with a strange feeling, that we had this tool that could instantly generate a couple thousand words of worthless text that at first glance seemed to pass muster. Which is so, so much worse than something written by a junior tech writer who doesn’t understand the subject, because this was produced by something that you can’t talk to, you can’t coach, that will never learn.

On a pretty regular basis this year, someone would pop up and say something along the lines of “I didn’t know the answer, and the docs were bad, so I asked the robot and it wrote the code for me!” and then they would post some screenshots of ChatGPT’s output containing a terribly wrong answer. Humane’s AI Pin demo was full of wrong answers, for heaven’s sake. And so we get this trend where ChatGPT manages to be an expert in things you know nothing about, but a moron about things you’re an expert in. I’m baffled by the responses to the GPT-n “search” “results”; they’re universally terrible and wrong.

And this is all baked into the technology! It’s a very, very fancy set of pattern recognition based on a huge corpus of (mostly stolen?) text, computing the most probable next word, but not in any way considering if the answer might be correct. Because it has no way to; that’s totally outside the bounds of what the system can achieve.

A year and a bit later, and the web is absolutely drowning in AI glurge. Clarkesworld had to suspend submissions for a while to get a handle on blocking the tide of AI garbage. Page after page of fake content with fake images, content no one ever wrote and only meant for other robots to read. Fake articles. Lists of things that don’t exist, recipes no one has ever cooked.

And we were already drowning in “AI” “machine learning” glurge, and it all sucks. The autocorrect on my phone got so bad when they went from the hard-coded list to the ML one that I had to turn it off. Google’s search results are terrible. The “we found this answer for you” thing at the top of the search results is terrible.

It’s bad, and bad by design; it can’t ever be more than a thoughtless mashup of material it pulled in. Or even worse, it’s not wrong so much as it’s all bullshit. Not outright lies, but vaguely truthy-shaped “content”, freely mixing copied facts with pure fiction, speech intended to persuade without regard for truth: Bullshit.

Every generated image would have been better and funnier if you gave the prompt to a real artist. But that would cost money—and that’s not even the problem, the problem is that would take time. Can’t we just have the computer kick something out now? Something that looks good enough from a distance? If I don’t count the fingers?

My question, though, is this: what future do these people want to live in? Is it really this? Swimming in a sea of glurge? Just endless mechanized bullshit flooding every corner of the Web? Who looked at the state of the world here in the Twenties and thought “what the world needs right now is a way to generate Infinite Bullshit”?

Of course, the fact that the results are terrible-but-occasionally-fascinating obscures the deeper issue: It’s a massive plagiarism machine.

Thanks to copyleft and free & open source, the tech industry has a pretty comprehensive—if idiosyncratic—understanding of copyright, fair use, and licensing. But that’s the wrong model. This isn’t about “fair use” or “transformative works”, this is about Plagiarism.

This is a real “humanities and the liberal arts vs technology” moment, because STEM really has no concept of plagiarism. Copying and pasting from the web is a legit way to do your job.

(I mean, stop and think about that for a second. There’s no other industry on earth where copying other people’s work verbatim into your own is a widely accepted technique. We had a sign up a few jobs back that read “Expert level copy and paste from Stack Overflow” and people would point at it when other people had questions about how to solve a problem!)

We have this massive cultural disconnect that would be interesting or funny if it wasn’t causing so much ruin. This feels like nothing so much as the end result of valuing STEM over the Humanities and Liberal Arts in education for the last few decades. Maybe we should have made sure all those kids we told to “learn to code” also had some, you know, ethics? Maybe had read a couple of books written since they turned fourteen?

So we land in a place where a bunch of people convinced they’re the princes of the universe have sucked up everything written on the internet and built a giant machine for laundering plagiarism; regurgitating and shuffling the content they didn’t ask permission to use. There’s a whole end-state libertarian angle here too; just because it’s not explicitly illegal, that means it’s okay to do it, ethics or morals be damned.

“It’s fair use!” Then the hell with fair use. I’d hate to lose the Wayback Machine, but even that respects robots.txt.

I used to be a hardcore open source, public domain, fair use guy, but then the worst people alive taught a bunch of if-statements to make unreadable counterfeit Calvin & Hobbes comics, and now I’m ready to join the Butlerian Jihad.

Why should I bother reading something that no one bothered to write?

Why should I bother looking at a picture that no one could be bothered to draw?

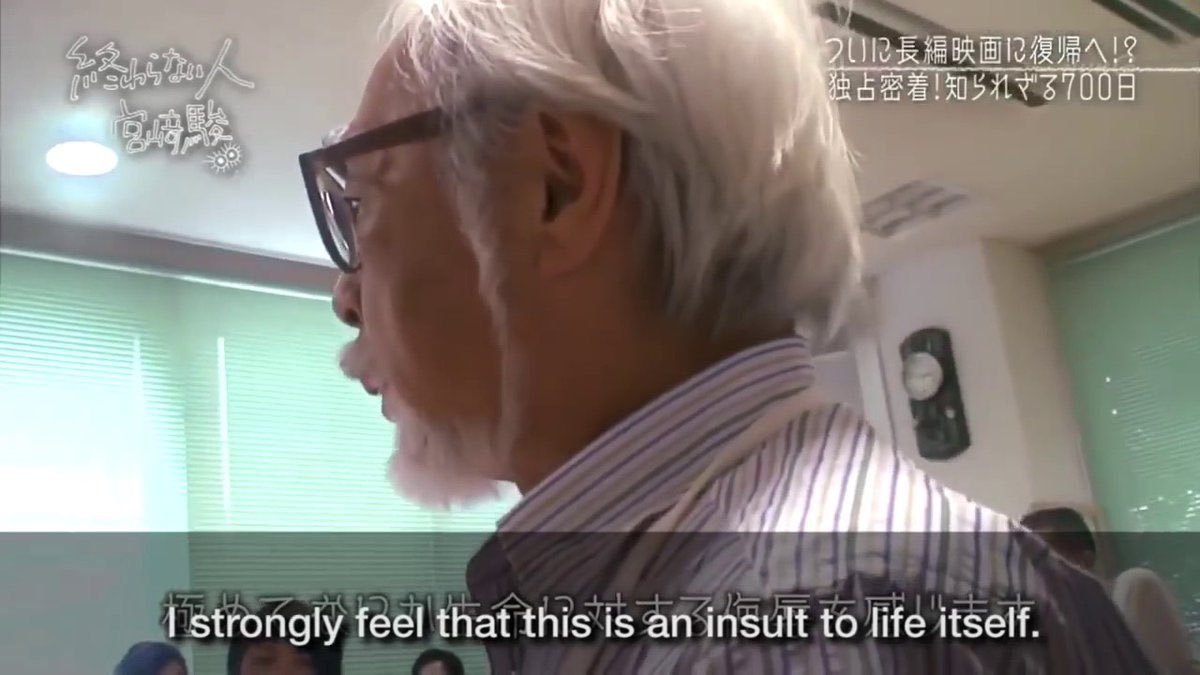

Generative AI and its ilk are the final apotheosis of the people who started calling art “content”, and meant it.

These are people who think art or creativity are fundamentally a trick, a confidence game. They don’t believe or understand that art can be about something. They utterly reject the concept of “about-ness”; the basic concept of “theme” is beyond their comprehension. The idea that art might contain anything other than its most surface qualities never crosses their mind. The sort of people who would say “Art should soothe, not distract”. Entirely about the surface aesthetic over anything.

(To put that another way, these are the same kind of people who vote Republican but listen to Rage Against the Machine.)

Don’t respect or value creativity.

Don’t respect actual expertise.

Don’t understand why they can’t just have what someone else worked for. It’s even worse than not wanting to pay for it; these creatures actually think they’re entitled to it for free because they know how to parse a JSON file. It feels like the final end-point of a certain flavor of free software thought: no one deserves to be paid for anything. A key cultural and conceptual point past “information wants to be free” and “everything is a remix”. Just a machine that endlessly spits out bad copies of other work.

They don’t understand that these are skills you can learn, that you have to work at and become an expert in. Not one of these people who spend hours upon hours training models or crafting prompts ever considered using that time to learn how to draw. Because if someone else can do it, they should get access to that skill for free, with no compensation or even credit.

This is why those machine generated Calvin & Hobbes comics were such a shock last summer; anyone who had understood a single thing about Bill Watterson’s work would have understood that he’d be utterly opposed to something like that. It’s difficult to fathom someone who liked the strip enough to do the work to train up a model to generate new ones while still not understanding what it was about.

“Consent” doesn’t even come up. These are not people you should leave your drink uncovered around.

But then you combine all that with the fact that we have a whole industry of neophiles, desperate to work on something New and Important, terrified their work might have no value.

(See also: the number of abandoned JavaScript frameworks that re-solve all the problems that have already been solved.)

As a result, tech has an ongoing issue with cool technology that’s a solution in search of a problem, but ultimately is only good for some kind of grift. The classic examples here are the blockchain, bitcoin, NFTs. But the list is endless: so-called “4th generation languages”, Rational Rose, the CueCat, basically anything that ever got put on the cover of Wired.

My go-to example is usually BitTorrent, which seemed really exciting at first, but turned out to only be good at acquiring TV shows that hadn’t aired in the US yet. (As they say, “If you want to know how to use BitTorrent, ask a Doctor Who fan.”)

And now generative AI.

There’s that scene at the end of Fargo, where Frances McDormand is scolding The Shoveler for “all this for such a tiny amount of money”, and that’s how I keep thinking about the AI grift carnival. So much stupid collateral damage we’re gonna be cleaning up for years, and it’s not like any of them are going to get Fuck You(tm) rich. No one is buying an island or founding a university here, this is all so some tech bros can buy the deluxe package on their next SUV. At least crypto got some people rich, and was just those dorks milking each other; here we all gotta deal with the pollution.

But this feels weirdly personal in a way the dunning-krugerrands were not. How on earth did we end up in a place where we automated art, but not making fast food, or some other minimum wage, minimum respect job?

For a while I thought this was something along the lines of one of the asides in David Graeber’s Bullshit Jobs, where people with meaningless jobs hate it when other people have meaningful ones. The phenomenon of “If we have to work crappy jobs, we want to pull everyone down to our level, not pull everyone up”. See also: “waffle house workers shouldn’t make 25 bucks an hour”, “state workers should have to work like a dog for that pension”, etc.

But no, these are not people with “bullshit jobs”, these are upper-middle class, incredibly comfortable tech bros pulling down half a million dollars a year. They just don’t believe creativity is real.

But because all that apparently isn’t fulfilling enough, they make up ghost stories about how their stochastic parrots are going to come alive and conquer the world, how we have to build good ones to fight the bad ones, but they can’t be stopped because it’s inevitable. Breathless article after article about whistleblowers worried about how dangerous it all is.

Just the self-declared best minds of our generation failing the mirror test over and over again.

This is usually where someone says something about how this isn’t a problem and we can all learn to be “prompt engineers”, or “advisors”. The people trying to become a prompt advisor are the same sort who would be proud they convinced Immortan Joe to strap them to the back of the car instead of the front.

This isn’t about computers, or technology, or “the future”, or the inevitability of change, or the march of progress. This is about what we value as a culture. What do we want?

“Thus did a handful of rapacious citizens come to control all that was worth controlling in America. Thus was the savage and stupid and entirely inappropriate and unnecessary and humorless American class system created. Honest, industrious, peaceful citizens were classed as bloodsuckers, if they asked to be paid a living wage. And they saw that praise was reserved henceforth for those who devised means of getting paid enormously for committing crimes against which no laws had been passed. Thus the American dream turned belly up, turned green, bobbed to the scummy surface of cupidity unlimited, filled with gas, went bang in the noonday sun.” ― Kurt Vonnegut, God Bless You, Mr. Rosewater

At the start of the year, the dominant narrative was that AI was inevitable, this was how things are going, get on board or get left behind.

That’s… not quite how the year went?

AI was a centerpiece in both Hollywood strikes, and both the Writers and Actors basically ran the table, getting everything they asked for, and enshrining a set of protections from AI into a contract for the first time. Excuse me, not protection from AI, but protection from the sort of empty suits that would use it to undercut working writers and performers.

Publisher after publisher has been updating their guidelines to forbid AI art. A remarkable number of other places that support artists instituted guidelines to ban or curtail AI. Even Kickstarter, which plunged into the blockchain with both feet, seemed to have learned their lesson and rolled out some pretty stringent rules.

Oh! And there are some actual high-powered lawsuits bearing down on the industry, not to mention investigations of, shall we say, “unsavory” material in the training sets?

The initial shine seems to be off. Where last year was all about sharing goofy AI-generated garbage, there’s been a real shift in the air as everyone gets tired of it and starts pointing out that it sucks, actually. And that the people still boosting it all seem to have some kind of scam going. Oh, and in a lot of cases, it’s literally the same people who were hyping blockchain a year or two ago, and who seem to have found a new use for their warehouses full of GPUs.

One of the more heartening and interesting developments this year was the (long overdue) start of a re-evaluation of the Luddites. Despite the popular stereotype, they weren’t anti-technology, but anti-technology-being-used-to-disenfranchise-workers. This seems to be the year a lot of people sat up and said “hey, me too!”

AI isn’t the only reason “hot labor summer” rolled into “eternal labor september”, but it’s pretty high on the list.

There’s an argument that’s sometimes made that we don’t have any way as a society to throw away a technology that already exists, but that’s not true. You can’t buy gasoline with lead in it, or hairspray with CFCs, and my late lamented McDLT vanished along with the Styrofoam that kept the hot side hot and the cold side cold.

And yes, asbestos made a bunch of people a lot of money and was very good at being children’s pyjamas that didn’t catch fire, as long as that child didn’t need to breathe as an adult.

But, we've never done that for software.

Back around the turn of the century, there was some argument about whether cryptography software should be classified as a munition. The Feds wanted stronger export controls, and there was a contingent of technologists who thought, basically, “Hey, it might be neat if our compiler had First and Second Amendment protection”. Obviously, that didn’t happen. “You can’t regulate math! It’s free expression!”

I don’t have a fully developed argument on this, but I’ve never been able to shake the feeling that it was a mistake, that we all got conned while we thought we were winning.

Maybe some precedent for heavily “regulating math” would be really useful right about now.

Maybe we need to start making some.

There’s been a persistent belief in computer science, ever since computers were invented, that brains are a really fancy powerful computer and if we can just figure out how to program them, intelligent robots are right around the corner.

There’s an analogy that floats around that says if the human mind is a bird, then AI will be a plane: flying, but a very different application of the same principles.

The human mind is not a computer.

At best, AI is a paper airplane. Sometimes a very fancy one! With nice paper and stickers and tricky folds! But the key is that a hand has to throw it.

The act of a person looking at a bunch of art and trying to build their own skills is fundamentally different than a software pattern recognition algorithm drawing a picture from pieces of other ones.

Anyone who claims otherwise has no concept of creativity other than as an abstract concept. The creative impulse is fundamental to the human condition. Everyone has it. In some people it’s repressed, or withered, or undeveloped, but it’s always there.

Back in the early days of the pandemic, people posted all these stories about the “crazy stuff they were making!” It wasn’t crazy, that was just the urge to create, it’s always there, and capitalism finally got quiet enough that you could hear it.

“Making Art” is what humans do. The rest of society is there so we stay alive long enough to do so. It’s not the part we need to automate away so we can spend more time delivering value to the shareholders.

AI isn’t going to turn into Skynet and take over the world. There won’t be killer robots coming for your life, or your job, or your kids.

However, the sort of soulless goons who thought it was a good idea to computer automate “writing poetry” before “fixing plumbing” are absolutely coming to take away your job, turn you into a gig worker, replace whoever they can with a chatbot, and keep all the money for themselves.

I can’t think of anything more profoundly evil than trying to automate creativity and leaving humans to do the grunt manual labor.

Fuck those people. And fuck everyone who ever enabled them.